GSA SER Verified Lists vs Scraping

The Eternal Debate in Automated Link Building

In the world of GSA Search Engine Ranker, few decisions have as much impact on your campaign’s success as where you get your targets. The central argument almost always circles back to one question: GSA SER verified lists vs scraping. Marketers often find themselves caught between the convenience of a pre-built list and the raw potential of harvesting targets directly from the web. Understanding the fundamental differences, the trade-offs in quality, and the long-term effects on your backlink profile can mean the difference between a healthy tiered network and a domain saturated with dead links. This article breaks down both approaches, revealing why the choice is never as simple as plug-and-play.

What Are GSA SER Verified Lists?

A verified list is a curated collection of URLs that have been confirmed to accept submissions from automated tools like GSA SER. These lists are typically compiled by experienced users who run massive identification campaigns, filtering out sites that are offline, have broken form fields, or use advanced protection. The core selling point is that every entry has already been tested. When you load a verified list into GSA SER, the software bypasses the uncertain stage of searching and moves directly to registration and posting. This means your projects start producing links almost immediately instead of spending days parsing search engines.

High-quality verified lists are often sorted by platform type (WordPress, Joomla, Drupal, forum profiles, trackbacks) and sometimes segmented by metrics like Domain Authority or spam score. Because the list has been pre-screened, you avoid wasting bandwidth and threads on URLs that will never return a successful submission. However, these lists age quickly. Platforms clean house, delete spam logs, or implement new filter plugins. A fresh verified list might deliver a 70% success rate, but that number drops steadily over weeks or months as sites vanish or upgrade their defenses.
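To make the aging effect concrete, here is a minimal sketch that assumes a list loses a fixed fraction of its working targets each week. The 70% starting rate is the figure mentioned above; the 10% weekly loss, the exponential-decay model, and the function name are illustrative assumptions, not measured values.

```python
def projected_success_rate(initial_rate: float, weekly_loss: float, weeks: int) -> float:
    """Estimate the share of list entries still accepting submissions.

    Assumes (hypothetically) that a constant fraction of the remaining
    working targets dies each week, i.e. simple exponential decay.
    """
    if not 0.0 <= initial_rate <= 1.0 or not 0.0 <= weekly_loss <= 1.0:
        raise ValueError("rates must be between 0 and 1")
    if weeks < 0:
        raise ValueError("weeks must be non-negative")
    return initial_rate * (1.0 - weekly_loss) ** weeks


if __name__ == "__main__":
    # Print the projected rate at a few checkpoints for a list that starts at 70%.
    for week in (0, 4, 8, 12):
        rate = projected_success_rate(0.70, 0.10, week)
        print(f"week {week:2d}: {rate:.1%}")
```

If you log your own verification rate each week, you can replace the assumed weekly loss with the figure you actually observe.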

The Scraping Approach: Building Your Own Targets

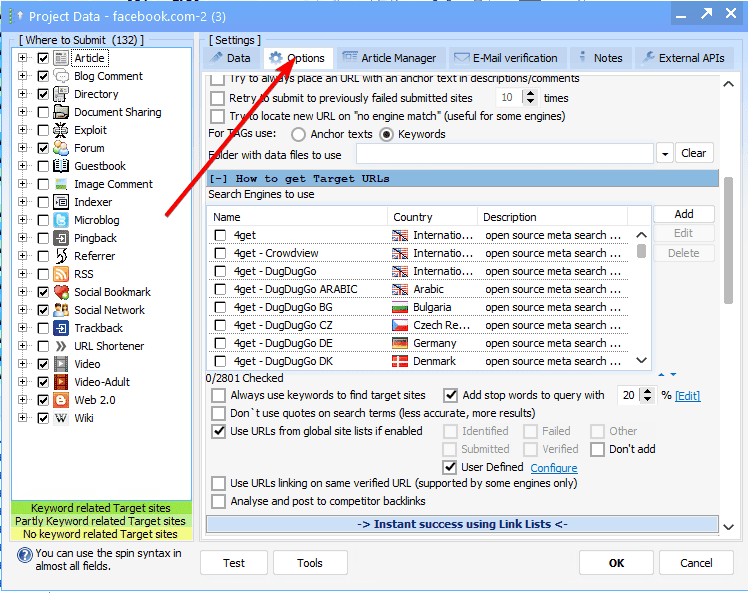

Scraping is the process of harvesting target URLs directly from search engines in real time. GSA SER has built-in capabilities to query Google, Bing, and other sources using footprints, which are search strings that look for platform-specific identifiers such as “Powered by WordPress” or “registration URL” patterns. Unlike a static list, scraping generates a fresh pipeline of domains every time you run a project. There is no reliance on a third party’s definition of what a good target looks like. Your campaign can be tuned to scrape aggressively for newly indexed sites, languages, or niche-specific keywords.
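The idea of pairing footprints with niche keywords can be sketched in a few lines. This is only an illustration of the concept, not how GSA SER builds its queries internally; the function name and the exact-phrase quoting are assumptions.

```python
from itertools import product


def build_queries(footprints, keywords):
    """Pair every footprint with every keyword to form search query strings.

    Footprints are wrapped in double quotes so a search engine would treat
    them as exact phrases; keywords are appended unquoted. Blank entries
    are skipped.
    """
    clean_footprints = [f.strip() for f in footprints if f.strip()]
    clean_keywords = [k.strip() for k in keywords if k.strip()]
    return [f'"{fp}" {kw}' for fp, kw in product(clean_footprints, clean_keywords)]


if __name__ == "__main__":
    for query in build_queries(["Powered by WordPress"], ["outdoor survival", "camping gear"]):
        print(query)
```

The number of queries grows as footprints times keywords, so a short, precise footprint list usually matters more than a long one.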

The biggest advantage of scraping is the discovery of completely untouched targets. While everyone else is hammering the same shared verified list, your scraped targets may have never seen a single automated submission. This reduces the immediate footprint and gives you a window of low competition. Scraping also allows for endless scaling—you can throw more proxies and threads at the process to widen your net. The downside is that it’s resource-intensive. Scraping consumes proxy bandwidth, CPU cycles, and time for verification before a single link is built. A poorly tuned scraping setup can also trip search engine captchas or produce thousands of irrelevant URLs that gum up your queue.

GSA SER Verified Lists vs Scraping: The Core Collision

When you put GSA SER verified lists vs scraping side by side, the distinction is really about control versus efficiency. Verified lists offer a shortcut through the most tedious part of link building: finding a needle in a haystack. Scraping hands you the reins but demands that you master footprints, proxy management, and filtering logic to avoid wasting resources. To make the right call, you have to examine the battleground on four key metrics.

Quality and Success Rate

A freshly updated verified list from a reputable seller often boasts an initial verified rate upwards of 80–90%. However, that number is a snapshot in time. By the time the list reaches your hands, some percentage of those targets are already dead. In contrast, scraping produces a live success rate that depends entirely on your footprint quality. If you scrape responsibly with precise footprints and aggressive on-the-fly checks, your verification rate can match or exceed a stale verified list. Moreover, self-scraped targets are naturally filtered for relevance if you use niche-specific keywords. Verified list providers rarely offer that level of thematic curation, meaning you might build links on a fashion blog for a casino site—fine for Tiers 2 or 3, but not ideal for anything close to a money page.

Time, Effort, and Learning Curve

A beginner can buy a verified list, import it into GSA SER, and see links rolling in within an hour. The effort is close to zero. Scraping, on the other hand, requires a solid understanding of search engine footprints, the patience to let harvesting run for hours before building begins, and the ability to troubleshoot captcha failures. For quick test projects or when you’re setting up a fresh VPS at 2 AM, verified lists are undeniably easier. Over time, however, the effort you invest in perfecting your scraping setup pays off because you never need to buy another list or wait for a list update. Apart from ongoing proxy costs, the process becomes autonomous.

Scalability and Resource Allocation

Verified lists scale by volume: you can buy a package with millions of URLs and saturate GSA SER’s threads. But you’re limited to what’s in the file. If your project demands 500,000 new targets a day for months, you’ll need an endless supply of purchased lists, which gets expensive. Scraping is the only truly infinite source. You can keep feeding your campaigns with new URLs as long as search engines exist and your proxies hold out. The trade-off is cost in proxy traffic. Scraping can burn through gigabytes of data daily, while verified lists sit quietly on your hard drive consuming no bandwidth until the posting phase. If you are on a metered connection or tight proxy budget, the math flips toward lists.
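A rough break-even check makes this trade-off easier to reason about. The sketch below compares a monthly list subscription against metered proxy traffic; every price and volume in it is a hypothetical placeholder, and it ignores CPU time and verification overhead.

```python
def monthly_scraping_cost(gb_per_day: float, price_per_gb: float, days: int = 30) -> float:
    """Proxy traffic cost for one month of scraping at a steady daily volume."""
    return gb_per_day * price_per_gb * days


def cheaper_source(list_price_per_month: float, gb_per_day: float,
                   price_per_gb: float, days: int = 30) -> str:
    """Return which target source costs less per month under the given assumptions."""
    scraping = monthly_scraping_cost(gb_per_day, price_per_gb, days)
    if scraping < list_price_per_month:
        return "scraping"
    if scraping > list_price_per_month:
        return "lists"
    return "tie"


if __name__ == "__main__":
    # Hypothetical figures: a 50-unit list subscription vs 2 GB/day at 1 unit per GB.
    print(cheaper_source(list_price_per_month=50.0, gb_per_day=2.0, price_per_gb=1.0))
```

With flat-rate or unmetered proxies, the per-gigabyte term drops out and the comparison tilts back toward scraping.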

Footprint Diversity and Safety

Any verified list that has been circulated – especially free ones – suffers from overuse. Thousands of users submitting to the exact same URL pattern creates a clearly identifiable footprint. Search engines and platform owners can blacklist those domains more easily. A scraped list, particularly if you’re using custom footprints and non-Google engines, introduces far more entropy. You’ll hit obscure domains that have never been targeted by GSA SER before, making it harder for automated spam filters to fingerprint your activity. This diversity is crucial if you are building tier-2 links that point directly to tier-1 properties you care about. The shared nature of verified lists makes them riskier for anything other than deep-tier noise.
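One way to gauge how much of your own target pool is already shared is to compare it against a circulated list at the domain level. The following is a minimal sketch using only the Python standard library; the helper names are hypothetical, and stripping a leading "www." is a simplifying assumption about what counts as the same domain.

```python
from urllib.parse import urlsplit


def domain_of(url: str) -> str:
    """Return the lowercase hostname of a URL, without a leading 'www.'."""
    candidate = url.strip()
    if "://" not in candidate:
        candidate = "http://" + candidate  # urlsplit needs a scheme to find the host
    host = (urlsplit(candidate).hostname or "").lower()
    return host[4:] if host.startswith("www.") else host


def overlap_ratio(own_urls, shared_urls) -> float:
    """Fraction of your distinct domains that also appear in the shared list."""
    own = {domain_of(u) for u in own_urls} - {""}
    shared = {domain_of(u) for u in shared_urls} - {""}
    if not own:
        return 0.0
    return len(own & shared) / len(own)


if __name__ == "__main__":
    mine = ["http://www.a.com/x", "https://b.org/y"]
    circulated = ["a.com/z", "http://c.net"]
    print(overlap_ratio(mine, circulated))
```

A low ratio suggests your scraped targets are mostly untouched by the shared list; a high ratio means you are paying the scraping cost without gaining much diversity.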

When Verified Lists Are the Unquestionable Winner

If you need to pump out a high volume of links for a tier-3 layer whose only purpose is to get indexed and pass a little link juice upward, a bulk verified list is perfect. You won’t worry about link neighborhood or relevance because those links serve as raw fuel. Projects with a short lifespan, like a churn-and-burn affiliate site that will be gone in six weeks, also benefit from the instant gratification of a list. Finally, if you are new to GSA SER and still learning about captcha solving and thread optimization, starting with a verified list lets you isolate one variable. You can get successful submissions immediately and then learn the scraping side once you understand how SER processes that data.

When Scraping Dominates Every Other Option

Scraping becomes non-negotiable when you’re building links to any tier that touches a money site. The freshness and uniqueness of scraped targets directly translate to a more natural footprint. Long-term white-hat projects, even if you’re using GSA SER in a gray-hat tiered configuration, demand the originality that only scraping can provide. You also lean into scraping when your niche is extremely narrow. A verified list will never target only “outdoor survival blogs,” but custom footprints with “survival forum powered by vBulletin” will. Scraping is also your only option if you’re running GSA SER on a large server farm and need a truly bottomless well of targets without a recurrent invoice for list updates. Once your scraping engine is tuned, it is self-sustaining.

The Hybrid Model That Wins Campaigns

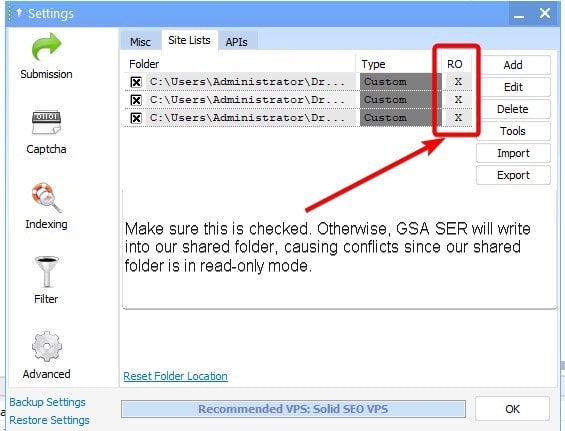

Savvy GSA SER users rarely rely on just one method. A common and effective strategy involves maintaining a scraping engine that runs 24/7, constantly harvesting fresh URLs and verifying them locally. These home-grown verified lists become an asset that rivals any commercial product, with the added benefit that you know exactly what footprints produced them. Then, you blend that with targeted real-time scraping for specific platforms that require fresh cookies or are heavily spammed. You might use a bulk purchased list exclusively for deep-tier referrer spam and social bookmark blasts, while reserving your scraped gems for Web 2.0 creations and any link that might be seen by a human reviewer. This hybrid approach balances cost, speed, and footprint diversity.
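The allocation logic behind the hybrid model can be written down as a simple routing table. The sketch below is one possible reading of the strategy described above; the source labels, the tier numbers assigned to each source, and the function name are all illustrative assumptions.

```python
# Hypothetical routing table: which tiers each target source may feed.
# Purchased lists are confined to the deepest tier; scraped targets are
# allowed closer to the money site.
ALLOWED_TIERS = {
    "purchased": {3},
    "self_verified": {1, 2, 3},
    "live_scrape": {1, 2},
}


def route_targets(targets, tier):
    """Return the URLs whose source is permitted for the given tier.

    `targets` is an iterable of (url, source) pairs. Unknown sources are
    excluded rather than guessed at.
    """
    return [url for url, source in targets if tier in ALLOWED_TIERS.get(source, set())]


if __name__ == "__main__":
    pool = [
        ("http://a.example/post", "purchased"),
        ("http://b.example/forum", "self_verified"),
        ("http://c.example/blog", "live_scrape"),
    ]
    print(route_targets(pool, tier=1))
    print(route_targets(pool, tier=3))
```

Keeping the rule in one table makes it easy to tighten later, for example by removing tier 1 from a source that starts showing overlap with shared lists.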

The conversation around GSA SER verified lists vs scraping will never end because both methods solve different problems. One is a tool for speed and simplicity, the other a weapon for control and longevity. By understanding the decay rate of pre-verified targets, the resource cost of harvesting your own, and the safety implications of each, you can allocate them within your tiered structure like a master architect. Use lists for the noisy, deep layers that exist only to support the next level up. Use scraping for any link that hovers within risk distance of your main asset. In the end, your GSA SER setup becomes a refinery—where stale, shared inputs are burned off in the lower tiers, and pure, unique outputs fuel the top.
